Contributors

Jan Jachnik, Richard A. Newcombe, Andrew J. Davison

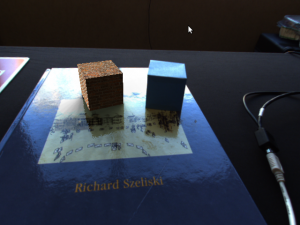

Virtual cube (left) vs real cube (right). Notice how the virtual cube occludes specular lighting effects on the surface of the book.

Abstract

A single hand-held camera provides an easily accessible but potentially extremely powerful setup for augmented reality. Capabilities which previously required expensive and complicated infrastructure, such as accurate camera tracking and, most recently, dense 3D scene reconstruction, have gradually become possible from a live monocular video feed. A new frontier is to work towards recovering the reflectance properties of general surfaces and the lighting configuration in a scene without the need for probes, omnidirectional cameras or specialised light-field cameras. Specular lighting phenomena cause effects in a video stream which can lead current tracking and reconstruction algorithms to fail. However, the potential exists to measure and use these effects to estimate deeper physical details about an environment, enabling advanced scene understanding and more convincing AR.

In this paper we present an algorithm for real-time surface light-field capture from a single hand-held camera, which is able to capture dense illumination information for general specular surfaces. Our system incorporates a guidance mechanism to help the user interactively during capture. We then split the light-field into its diffuse and specular components, and show that the specular component can be used for estimation of an environment map. This enables the convincing placement of an augmentation on a specular surface such as a shiny book, with realistic synthesized shadow, reflection and occlusion of specularities as the viewpoint changes. Our method currently works for planar scenes, but the surface light-field representation makes it ideal for future combination with dense 3D reconstruction methods.
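To make the diffuse/specular split more concrete, here is a minimal sketch for a single surface point. It assumes a simple heuristic that may differ from what the paper actually does: the diffuse term is taken as the median of the intensities observed across viewing directions, and the specular term is whatever non-negative residual remains in each view. The function name `split_diffuse_specular`, the view-direction keys and the sample values are all hypothetical and chosen only for illustration.

```python
from statistics import median


def split_diffuse_specular(samples):
    """Split per-view intensity samples of one surface point.

    samples: dict mapping a (hypothetical) discretised view-direction key
             to the intensity observed from that direction.
    Returns (diffuse, specular): diffuse is a single view-independent value,
    specular maps each view key to its non-negative residual.
    """
    if not samples:
        raise ValueError("need at least one observation")

    # Assumption: highlights are visible from a minority of views,
    # so the median is a robust estimate of the view-independent part.
    diffuse = median(samples.values())

    # The view-dependent remainder, clamped so that noise below the
    # diffuse level is not treated as negative light.
    specular = {view: max(0.0, value - diffuse)
                for view, value in samples.items()}
    return diffuse, specular


# Illustrative observations: one view catches a highlight.
observations = {
    (0, 0): 0.30,
    (0, 1): 0.31,
    (1, 0): 0.29,
    (1, 1): 0.90,  # specular highlight seen from this direction
    (2, 1): 0.30,
}
diffuse, specular = split_diffuse_specular(observations)
```

In this sketch the highlight view keeps a large residual while the other views stay near zero. Residuals of this kind, gathered over many surface points, are the sort of view-dependent signal that could feed an environment map estimate.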

Published at ISMAR 2012